Nvidia has unveiled “Chat with RTX,” a tool that lets owners of GeForce RTX 30 Series and 40 Series graphics cards run an AI-powered chatbot offline, directly on their Windows PCs.

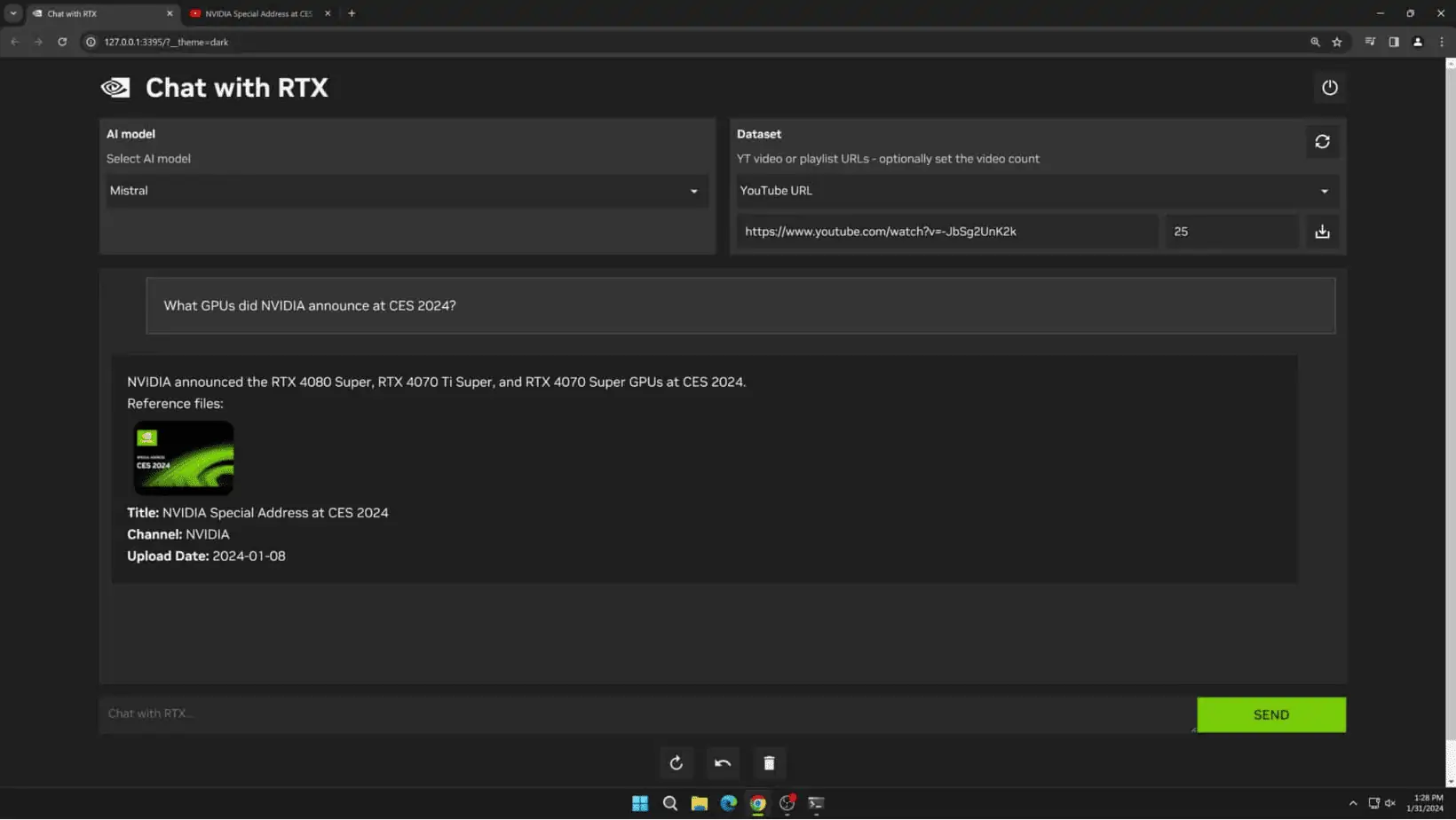

Chat with RTX lets users customize a GenAI model, along the lines of OpenAI’s ChatGPT, by connecting it to local documents, files, and notes. Those files can then be queried without an internet connection: the user types a question, and the chatbot scans the local files and returns a relevant answer with context.

Chat with RTX uses Mistral’s open source model by default, but users can switch to other text-based models, including Meta’s Llama 2. Supported file formats include text, PDF, .doc, .docx, and .xml.

| Supported File Formats |
|---|
| Text |
| PDF |
| .doc |
| .docx |
| .xml |

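Users point the application at a folder containing the files they want to query, and the tool indexes them so the model can draw on them when answering. Nvidia describes this approach as retrieval-augmented generation, meaning the files are looked up at query time rather than used to retrain the model. The tool can also take a YouTube playlist URL and load transcriptions of the videos in that playlist for querying.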
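To make the "point it at a folder" idea concrete, here is a minimal Python sketch of the general pattern: collect the files with supported extensions, then pick the ones most relevant to a question. It is not Nvidia's implementation. The function names `find_supported_files` and `rank_by_overlap` are hypothetical, the mapping of "text" to `.txt` is an assumption, and the word-overlap scoring is a deliberately naive stand-in for whatever retrieval method the real tool uses.

```python
from pathlib import Path

# Extensions based on the formats listed in the article.
# Treating "text" as ".txt" is an assumption made for this sketch.
SUPPORTED_EXTENSIONS = {".txt", ".pdf", ".doc", ".docx", ".xml"}


def find_supported_files(folder):
    """Return paths under `folder` (recursively) with a supported extension."""
    root = Path(folder)
    return sorted(
        p for p in root.rglob("*")
        if p.is_file() and p.suffix.lower() in SUPPORTED_EXTENSIONS
    )


def rank_by_overlap(query, documents):
    """Rank document names by how many distinct query words their text contains.

    `documents` maps a name to its already-extracted plain text.
    Documents sharing no words with the query are dropped.
    """
    query_words = set(query.lower().split())
    scored = []
    for name, text in documents.items():
        overlap = len(query_words & set(text.lower().split()))
        if overlap > 0:
            scored.append((overlap, name))
    # Highest overlap first; ties broken alphabetically by name.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [name for _, name in scored]
```

In a real pipeline, the text of each file would first have to be extracted (PDF and .docx need dedicated parsers), and the top-ranked passages would be passed to the language model along with the user's question.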

Chat with RTX has limitations. It does not retain context between questions, so it cannot take earlier questions into account when answering a follow-up. The relevance of its responses can also vary with question phrasing, the performance of the selected model, and the size of the dataset.

Nvidia acknowledges that Chat with RTX is primarily intended for recreational use rather than production-level tasks. Nonetheless, the tool represents a step towards democratizing AI capabilities for local usage.

The World Economic Forum predicts a significant increase in affordable devices capable of running GenAI models offline. This includes PCs, smartphones, IoT devices, and networking equipment. The shift towards locally run AI models offers several advantages, including enhanced privacy, lower latency, and cost-effectiveness compared to cloud-hosted alternatives.

While the democratization of AI tools raises concerns about potential misuse, proponents argue that the benefits outweigh the risks. Nevertheless, the true impact of tools like Chat with RTX remains to be seen as they become more accessible to users worldwide.
